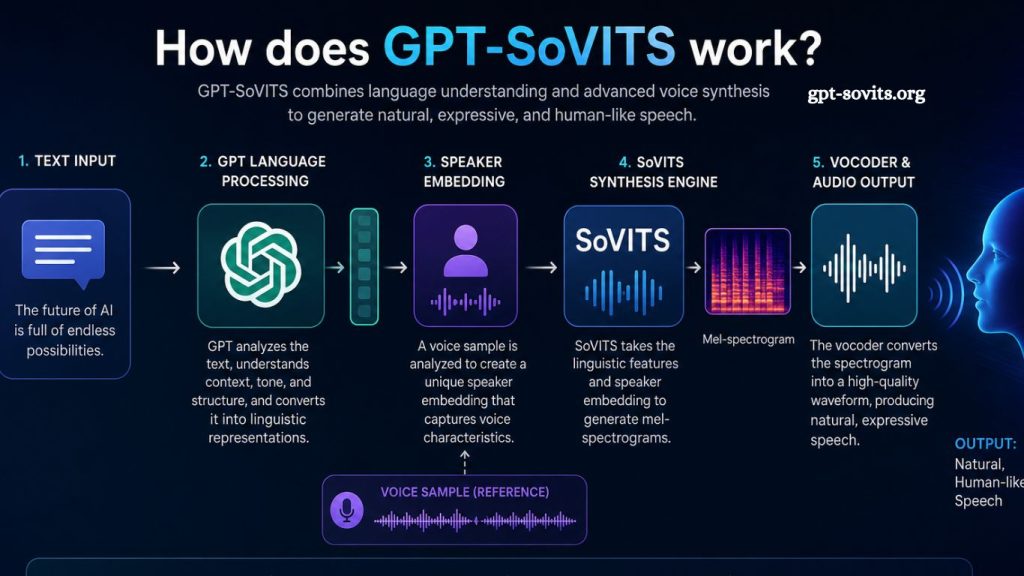

Voice AI technology has moved beyond simple text-to-speech systems. Modern models now generate expressive, natural, and human-like speech with impressive accuracy. GPT-SoVITS stands among the advanced frameworks designed to combine generative language modeling with high-quality voice synthesis. Understanding how GPT-SoVITS works helps clarify why it delivers realistic speech cloning and strong audio performance in modern AI applications.

Understanding GPT-SoVITS in Simple Terms

GPT-SoVITS represents a hybrid architecture that merges two powerful AI systems: GPT-based language modeling and SoVITS voice synthesis. GPT handles text understanding and sequence generation while SoVITS focuses on converting linguistic representations into natural-sounding speech.

Language processing begins at the GPT stage. Voice generation completes at the SoVITS stage. The combined operation allows the system to take text input and produce expressive spoken output that closely aligns with human speech in tone, rhythm, and pronunciation.

Read More: GPT-SoVITS Explained: Meaning, Features, and Use Cases

Core Components Behind GPT-SoVITS

GPT-Based Language Modeling

GPT models function as deep neural networks trained on massive text datasets. These models learn grammar, semantics, and contextual relationships between words. In GPT-SoVITS, this component interprets input text and converts it into intermediate representations suitable for speech synthesis.

Text transformation does not rely only on word prediction. Context awareness plays a key role. Emotional cues, punctuation, and sentence structure influence how the model prepares data for the next stage.

SoVITS Speech Synthesis Engine

SoVITS stands for Soft VITS, an improved version of Variational Inference Text-to-Speech systems. This component focuses on producing high-fidelity audio output. It takes processed linguistic features from the GPT layer and transforms them into waveform audio.

Voice cloning capability represents a major strength of SoVITS. A small sample of a target voice allows the system to reproduce tone, pitch, and speaking style with high similarity. Neural vocoders enhance audio clarity and realism during this stage.

Voice Embedding System

Voice embedding enables the model to understand speaker identity. Each voice gets mapped into a numerical representation. These embeddings help the system maintain a consistent tone across generated speech.

Consistency remains essential in applications like virtual assistants, dubbing, and personalized voice systems. GPT-SoVITS uses embedding vectors to ensure stable voice reproduction even across long sentences.

How GPT-SoVITS Processes Input Step by Step

Step 1: Text Input Analysis

System receives raw text as input. The GPT module breaks down sentences into tokens. Each token represents a word or subword unit. Context between tokens gets analyzed to preserve meaning.

Sentence structure influences prosody decisions. Questions, exclamations, and pauses receive different interpretations during processing.

Step 2: Linguistic Feature Generation

Processed tokens convert into linguistic features. These features include phonetic structures, stress patterns, and timing information. GPT ensures semantic accuracy while preparing speech-friendly representation.

This stage bridges natural language understanding with acoustic preparation. Output remains non-audio but fully structured for speech synthesis.

Step 3: Speaker Conditioning

Voice selection activates at this stage. The system applies speaker embeddings to define the identity of the generated speech. These embeddings control vocal characteristics such as tone, gender, accent, and speaking rhythm.

Voice consistency improves through conditioning vectors that guide SoVITS during synthesis.

Step 4: Acoustic Conversion by SoVITS

SoVITS converts prepared features into mel-spectrograms. These spectrograms represent sound frequency patterns over time. Neural networks map these patterns into realistic audio waveforms.

Deep learning models enhance natural transitions between phonemes. Speech sounds smoother rather than robotic due to improved waveform generation techniques.

Step 5: Audio Output Generation

Final waveform passes through a vocoder. Vocoder refines audio clarity and removes distortions. Output becomes high-quality speech ready for playback or streaming.

Final voice output reflects the original speaker’s characteristics, along with emotional and contextual cues from the input text.

Key Features of GPT-SoVITS

High-Quality Voice Cloning

The system reproduces voices with minimal training data. Even short audio samples can produce accurate voice replication. This capability supports content creation, dubbing, and personalized assistants.

Natural Speech Expression

Model captures rhythm, intonation, and emotional tone. Speech sounds fluid rather than mechanical. Proper handling of pauses and emphasis improves listener experience.

Multi-Language Support

Architecture supports multiple languages depending on the training data. Language flexibility enables global applications across education, entertainment, and communication.

Low Data Requirement

Training efficiency stands out as a major advantage. SoVITS requires less voice data compared to traditional TTS systems. GPT integration improves generalization ability across unseen text.

Applications of GPT-SoVITS

Content Creation Industry

Voiceovers for videos, podcasts, and audiobooks benefit from fast generation. Creators produce high-quality narration without hiring voice actors for every project.

Virtual Assistants

AI assistants use GPT-SoVITS for more human-like interaction. Improved speech quality enhances user engagement and trust in digital assistants.

Gaming and Animation

Game developers integrate voice cloning for dynamic character dialogue. Characters respond with natural voice variation based on storyline context.

Accessibility Tools

Text-to-speech support improves accessibility for visually impaired users. Natural speech output increases comprehension and usability.

Advantages Over Traditional TTS Systems

Older text-to-speech systems rely heavily on rule-based synthesis. These systems often sound robotic and lack emotional depth. GPT-SoVITS replaces rigid structures with deep learning models.

Improved neural architectures allow better adaptation to context. Emotional cues become part of speech generation instead of static additions.

Voice consistency also improves significantly. Traditional systems struggle with long sentences or varied tone, while GPT-SoVITS maintains a stable voice identity.

Technical Challenges in GPT-SoVITS

Computational Demand

High-quality synthesis requires strong computing power. Training and inference may demand GPU acceleration for real-time performance.

Data Sensitivity

Voice cloning raises ethical concerns when used without consent. Proper safeguards remain necessary to prevent misuse.

Model Complexity

Integration of GPT and SoVITS increases system complexity. Optimization is essential for reducing latency in real-world applications.

Frequently Asked Questions

What is GPT-SoVITS?

GPT-SoVITS is an AI voice synthesis system that combines GPT-based language processing with SoVITS speech generation to create natural-sounding speech.

How does GPT-SoVITS generate speech?

It first processes text with a GPT model, then converts it into speech using the SoVITS synthesis engine, leveraging learned voice patterns.

Can GPT-SoVITS clone any voice?

Yes, it can replicate voices using short audio samples, but accuracy depends on the quality and amount of training data provided.

Is GPT-SoVITS better than traditional text-to-speech systems?

GPT-SoVITS produces more natural, expressive, and human-like speech compared to older rule-based or basic neural TTS systems.

What are the main uses of GPT-SoVITS?

It is widely used in content creation, virtual assistants, gaming, dubbing, and speech generation tools for accessibility.

Does GPT-SoVITS support multiple languages?

Yes, it can support multiple languages depending on the training data and model configuration used.

Is GPT-SoVITS safe to use for voice cloning?

It is safe when used ethically with proper consent, but misuse for unauthorized voice cloning can raise legal and ethical concerns.

Conclusion

GPT-SoVITS brings a major shift in AI voice generation by merging advanced language understanding with high-quality speech synthesis. The system processes text through GPT models to capture meaning and context, then converts it into natural speech using the SoVITS engine. This combination produces expressive, human-like voices with strong consistency and clarity.