GPT-SoVITS voice cloning has quickly become a powerful solution for generating natural-sounding synthetic voices with minimal training data. Content creators, developers, and AI enthusiasts now use this technology to replicate voices for narration, dubbing, and conversational AI systems. A common question arises during setup: how much audio is actually required to achieve high-quality voice cloning results?

Audio quantity plays a major role in voice quality, stability, and realism. Too little data can lead to robotic or inconsistent output, while too much unfiltered data may introduce noise and inefficiency during training. Understanding the right balance ensures better performance and faster model convergence.

This guide explains the optimal audio duration for GPT-SoVITS voice cloning, factors affecting training quality, and best practices for achieving professional-grade synthetic voices.

Understanding GPT-SoVITS Voice Cloning

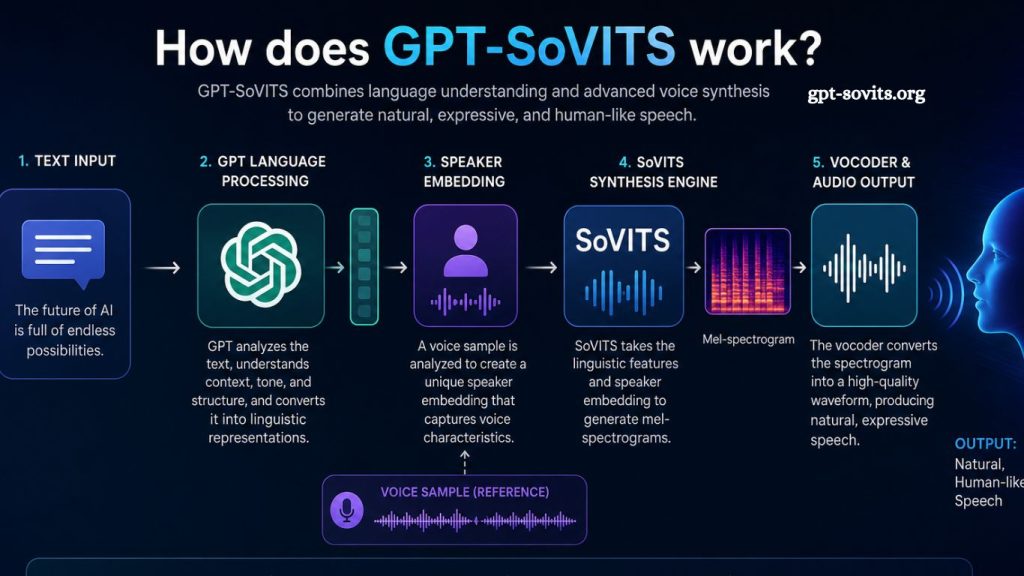

GPT-SoVITS combines advanced speech synthesis with deep learning-based voice conversion. The system learns speaker characteristics such as tone, pitch, accent, and speaking style from provided audio samples. Once trained, it generates speech that closely matches the original speaker’s identity.

SoVITS focuses on voice conversion quality, while GPT enhances linguistic fluency and contextual speech generation. Together, they create a hybrid model capable of producing expressive and realistic speech output.

Read More: GPT-SoVITS Voice Cloning Explained: Can It Clone Any Voice?

Training success depends heavily on the quality and quantity of input audio. Clean, well-segmented samples significantly improve cloning accuracy.

Minimum Audio Requirement for GPT-SoVITS

Voice cloning performance depends on training goals and desired output quality. Different use cases require different amounts of audio data.

Absolute Minimum Range (3–10 Minutes)

Basic voice cloning becomes possible with approximately 3 to 10 minutes of clean speech audio. This range supports quick testing and prototype generation.

Models trained on this dataset typically produce recognizable voices but may lack stability, emotional variation, and natural prosody. Small datasets also increase the risk of overfitting, where the model memorizes rather than generalizes speech patterns.

This range works best for experimentation rather than production use.

Recommended Baseline (20–60 Minutes)

A dataset between 20 and 60 minutes delivers significantly improved results. Speech output becomes more stable, natural, and expressive.

Training within this range allows the model to capture:

- Basic tone consistency

- Natural speaking rhythm

- Clear pronunciation patterns

- Limited emotional variation

Many developers consider this range the practical minimum for usable voice cloning applications. Quality increases noticeably compared to ultra-short datasets.

Professional Quality Range (1–3 Hours)

High-quality voice cloning typically requires 1 to 3 hours of clean and diverse audio. This range supports production-level applications such as audiobooks, AI assistants, and dubbing systems.

Models trained on larger datasets can capture:

- Detailed emotional expression

- Strong speaker identity consistency

- Better handling of long-form speech

- Reduced artifacts and distortions

Speech output becomes more stable across different sentences and contexts.

Advanced Training Range (3+ Hours)

Datasets exceeding 3 hours provide maximum voice fidelity. Large-scale training improves generalization and reduces synthesis errors in complex sentences.

Benefits include:

- Highly natural speech flow

- Strong adaptability to different prompts

- Better multilingual or accented speech handling

- Reduced need for post-processing

Such datasets are commonly used in commercial-grade voice AI systems.

Factors That Affect Audio Requirements

Audio duration alone does not determine the quality of voice cloning. Several critical factors influence training efficiency and output performance.

Audio Quality

Clean recordings dramatically reduce the amount of data needed. Background noise, echo, or distortion increases training difficulty and reduces model accuracy.

High-quality audio should include:

- Clear microphone capture

- Minimal environmental noise

- Consistent recording volume

- No overlapping speech

Clean audio often outperforms larger but noisy datasets.

Speaker Consistency

Voice cloning performs best when all samples belong to a single speaker with a consistent tone and style. Mixing different speakers confuses the model and reduces output accuracy.

Consistency ensures:

- Stable voice identity

- Predictable pronunciation

- Improved natural flow

- Sampling Diversity

Balanced datasets include different speaking styles such as:

- Neutral reading

- Emotional speech

- Questions and statements

- Varied sentence lengths

Diversity improves expressive range in generated speech.

Language Coverage

Multilingual datasets require more audio to achieve consistent results. Each language introduces new phonetic structures, increasing training complexity.

Single-language models require less data compared to multilingual systems.

Best Practices for GPT-SoVITS Audio Preparation

Proper preprocessing improves training efficiency and reduces the required audio duration.

Segment Audio Properly

Short clips between 3 and 10 seconds perform best. Long recordings reduce alignment accuracy and increase training errors.

Remove Background Noise

Noise reduction tools help isolate speech and improve clarity. Cleaner input reduces the need for large datasets.

Normalize Volume Levels

Consistent volume ensures stable model learning and prevents distortion during synthesis.

Use High-Quality Recording Equipment

Professional microphones or high-end mobile devices produce significantly better results than low-quality recording setups.

Common Mistakes in Audio Preparation

Many training issues come from avoidable errors during dataset creation.

Insufficient Audio Diversity

Using only one type of speech (such as monotone reading) limits model expressiveness.

Poor Audio Quality

Noisy recordings force the model to learn unwanted artifacts.

Inconsistent Speaker Identity

Mixing voices leads to unstable or incorrect voice cloning output.

Overloading with Unnecessary Data

Excessive low-quality audio increases training time without improving performance.

How Audio Length Impacts Voice Quality

Short datasets lead to fast but limited cloning results. Medium datasets balance efficiency and quality. Large datasets provide professional-grade synthesis with improved emotional depth and naturalness.

Model performance improves logarithmically rather than linearly. The first 10–30 minutes often contribute the most noticeable improvement. Additional hours refine accuracy and realism.

The optimal strategy involves starting with a clean 30–60-minute dataset and scaling up based on output requirements.

Real-World Use Cases

GPT-SoVITS voice cloning supports multiple industries and applications.

Content Creation

Creators use cloned voices for narration, storytelling, and video production.

Game Development

Developers integrate synthetic voices into character dialogue systems.

Education

Voice cloning helps generate multilingual learning materials.

Accessibility Tools

AI-generated speech helps visually impaired users access written content.

Customer Support

Businesses deploy cloned voices in automated response systems.

Frequently Asked Questions

How much audio is needed for GPT-SoVITS voice cloning?

GPT-SoVITS works with as little as 3–10 minutes of audio, but 20–60 minutes yields more stable, natural results.

Is 5 minutes of audio enough for voice cloning?

5 minutes is enough for basic testing, but the output may sound unstable or less realistic compared to larger datasets.

What is the best audio length for high-quality results?

1–3 hours of clean, consistent audio delivers professional-grade voice cloning with natural tone and expression.

Does audio quality matter more than duration?

High-quality, noise-free audio often improves results more than simply increasing dataset length.

Can GPT-SoVITS clone a voice with a few samples?

Yes, but limited samples reduce accuracy, emotional range, and voice stability in generated speech.

Do I need multiple speakers for training?

No, single-speaker consistent audio is recommended for accurate and stable voice cloning results.

Why does my cloned voice sound unnatural?

Unnatural output usually results from noisy audio, insufficient training data, or inconsistent recording quality.

Conclusion

GPT-SoVITS voice cloning delivers strong results when supported by sufficient clean, consistent audio data. Small datasets of 3–10 minutes enable quick experimentation, while 20–60 minutes improve stability and natural speech quality. Professional applications typically rely on 1–3 hours of well-prepared recordings to achieve a realistic tone, expression, and fluency. Larger datasets further enhance accuracy and adaptability across different contexts.